What is the Mixed Reality Toolkit

MRTK-Unity is a Microsoft-driven project that provides a set of components and features to accelerate cross-platform MR app development in Unity. Here are some of its functions:

- Provides the cross-platform input system and building blocks for spatial interactions and UI.

- Enables rapid prototyping via in-editor simulation that allows you to see changes immediately.

- Operates as an extensible framework that provides developers the ability to swap out core components.

- Supports a wide range of platforms, including:

  - Microsoft HoloLens

  - Microsoft HoloLens 2

  - Windows Mixed Reality headsets

  - OpenVR headsets (HTC Vive / Oculus Rift)

  - Ultraleap Hand Tracking

  - Mobile devices such as iOS and Android

Getting started with MRTK

If you're new to MRTK or Mixed Reality development in Unity, we recommend you start at the beginning of our Unity development journey in the Microsoft Docs. The Unity development journey is specifically tailored to walk new developers through the installation, core concepts, and usage of MRTK.

| IMPORTANT: The Unity development journey currently uses MRTK version 2.4.0 and Unity 2019.4. |
|---|

If you're an experienced Mixed Reality or MRTK developer, check the links in the next section for the newest packages and release notes.

Documentation

| Release Notes | MRTK Overview | Feature Guides | API Reference |
|---|---|---|---|

Build status

| Branch | CI Status | Docs Status |
|---|---|---|
| mrtk_development | | |

Required software

| Windows SDK 18362+ | Unity 2018.4.x | Visual Studio 2019 | Emulators (optional) |
|---|---|---|---|
| To build apps with MRTK v2, you need the Windows 10 May 2019 Update SDK. To run apps for immersive headsets, you need the Windows 10 Fall Creators Update. | The Unity 3D engine provides support for building mixed reality projects in Windows 10 | Visual Studio is used for code editing, deploying and building UWP app packages | The Emulators allow you to test your app without the device in a simulated environment |

Feature areas

UX building blocks

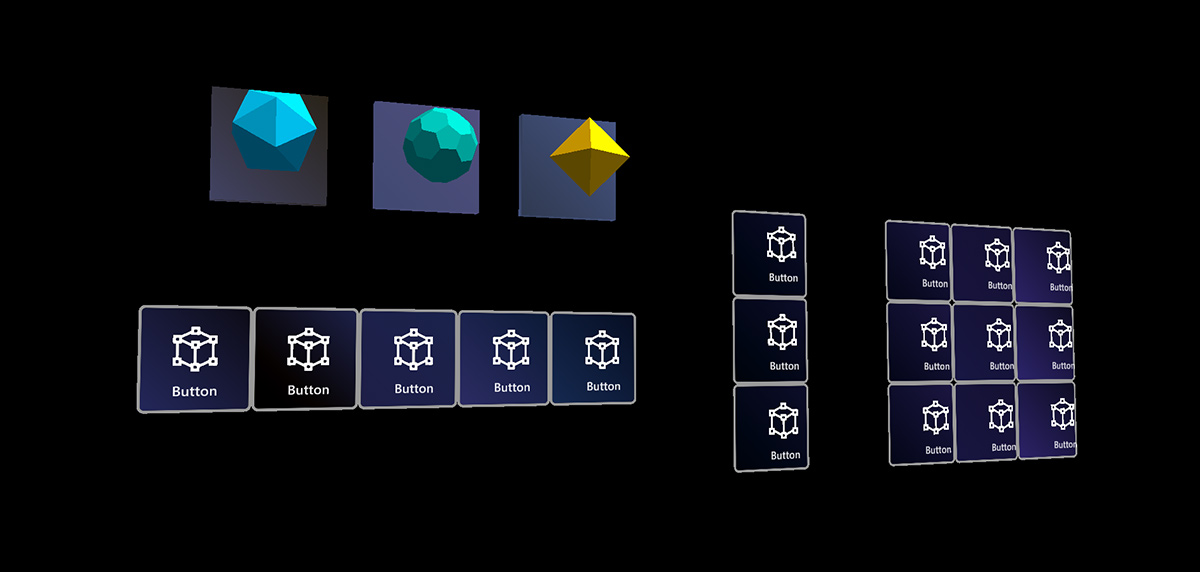

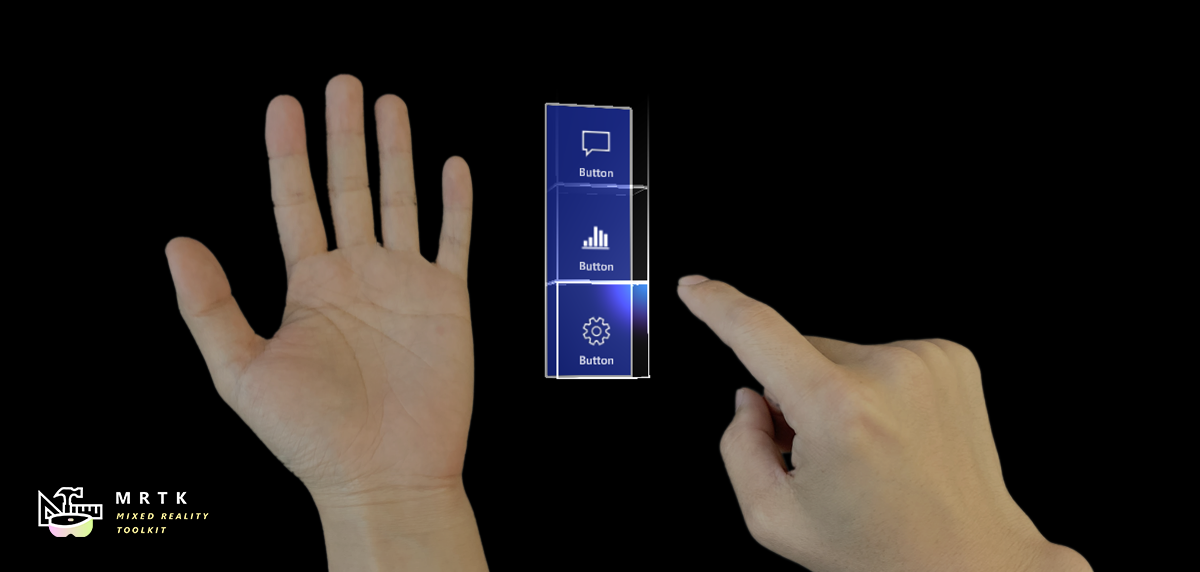

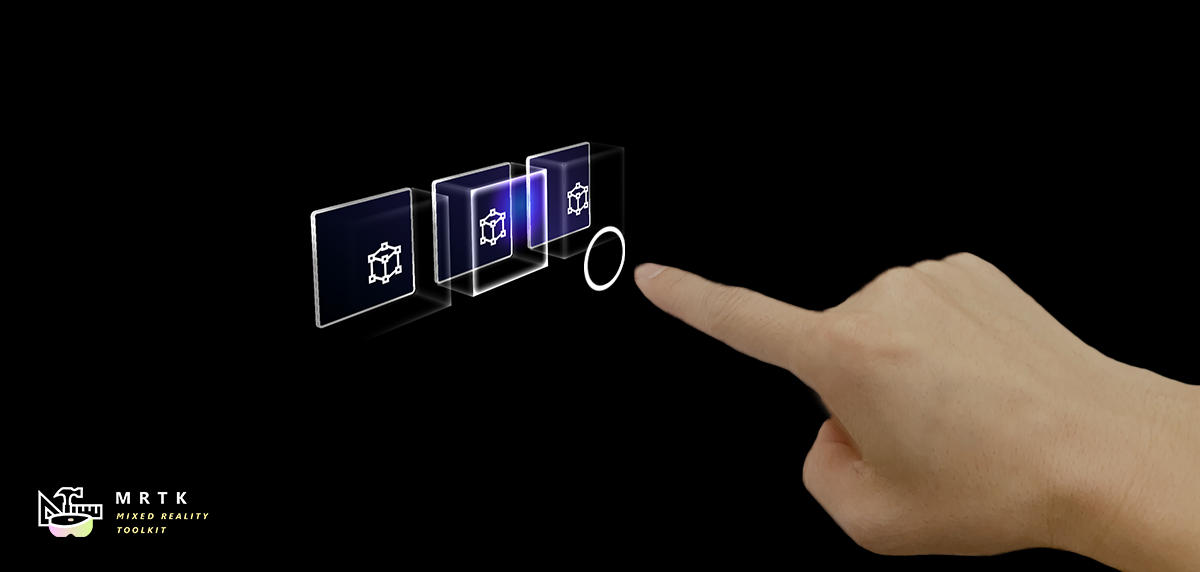

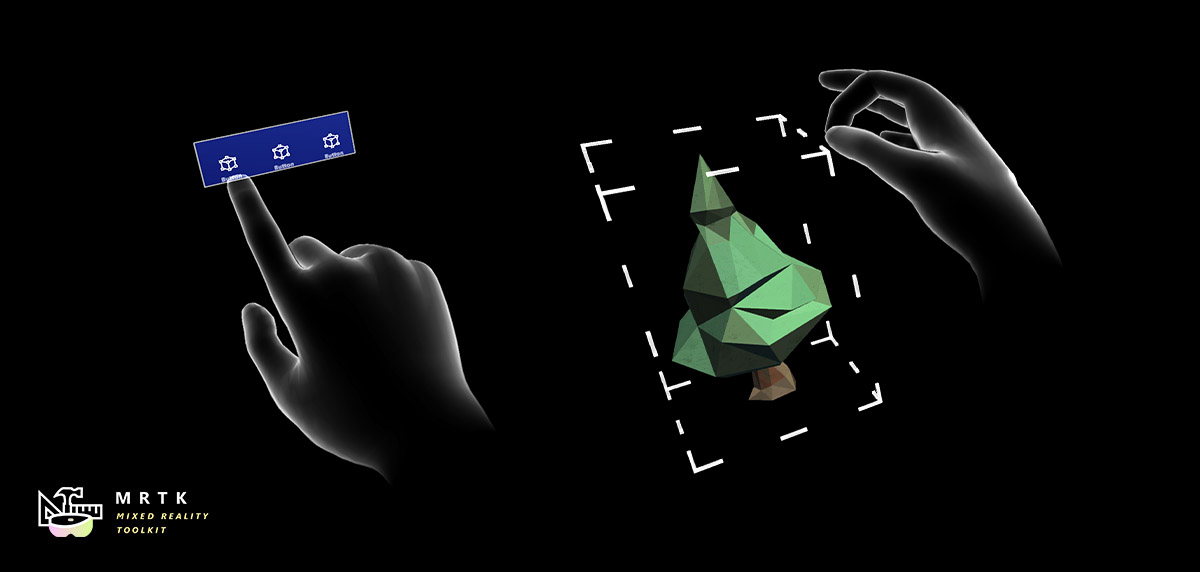

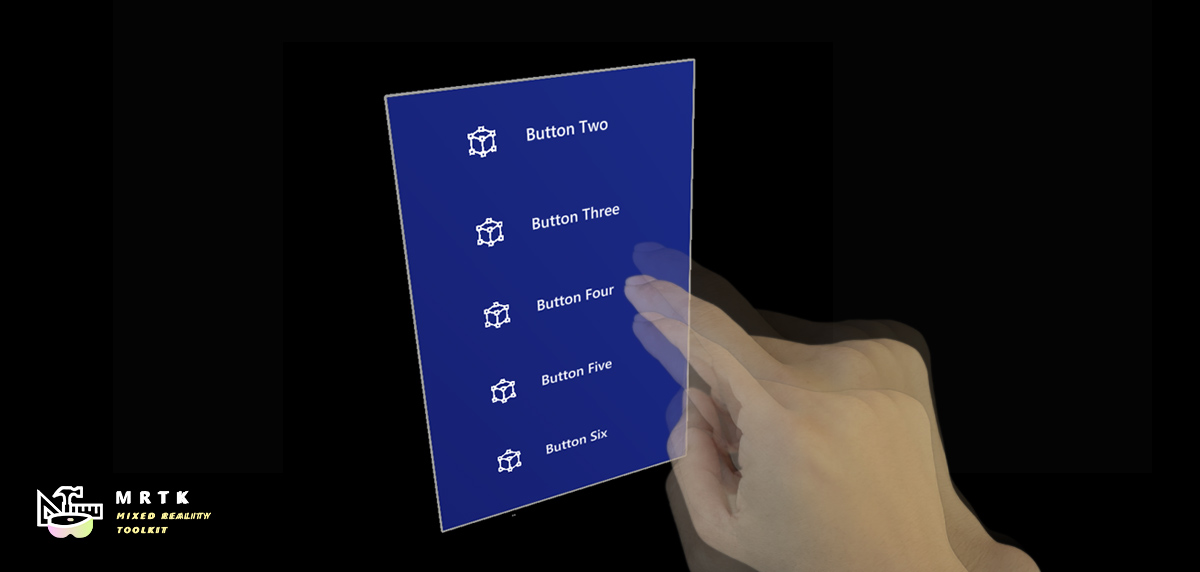

Button Button |

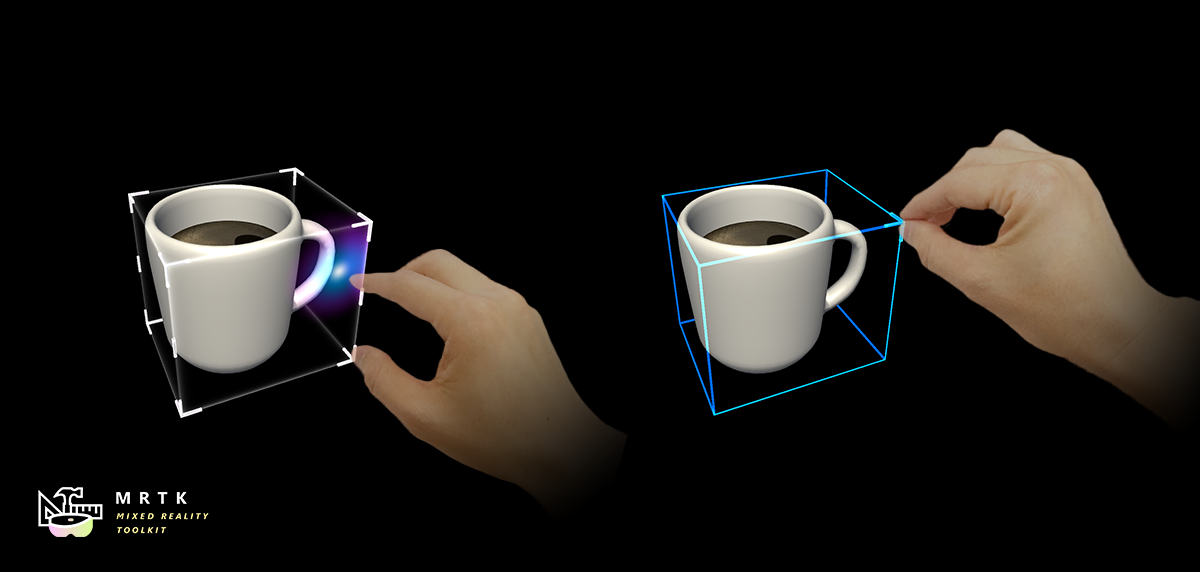

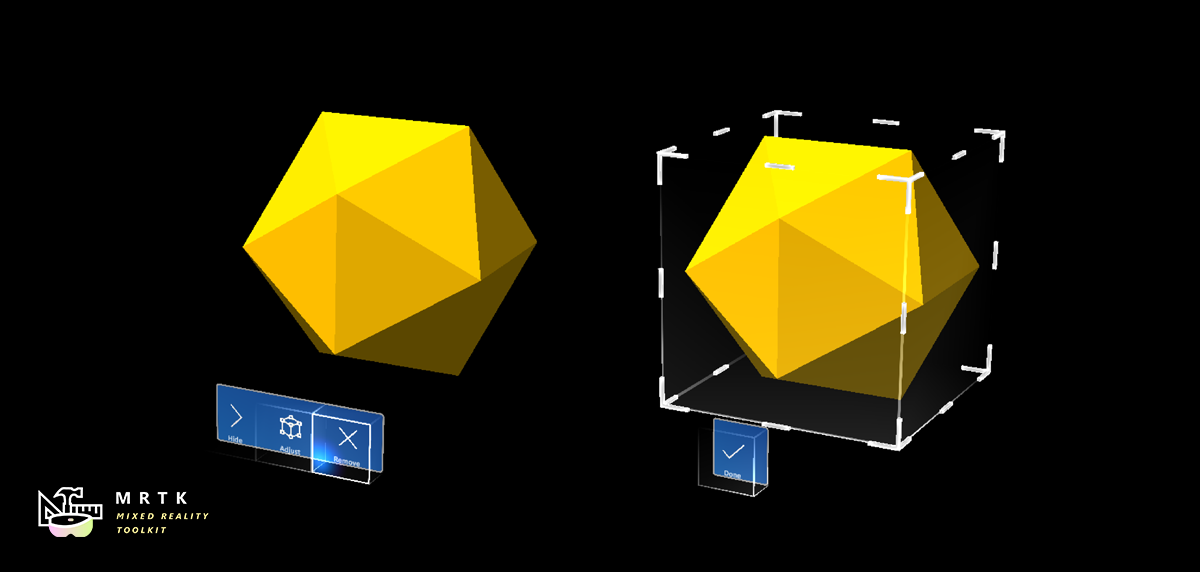

Bounds Control Bounds Control |

Object Manipulator Object Manipulator |

|---|---|---|

| A button control which supports various input methods, including HoloLens 2's articulated hand | Standard UI for manipulating objects in 3D space | Script for manipulating objects with one or two hands |

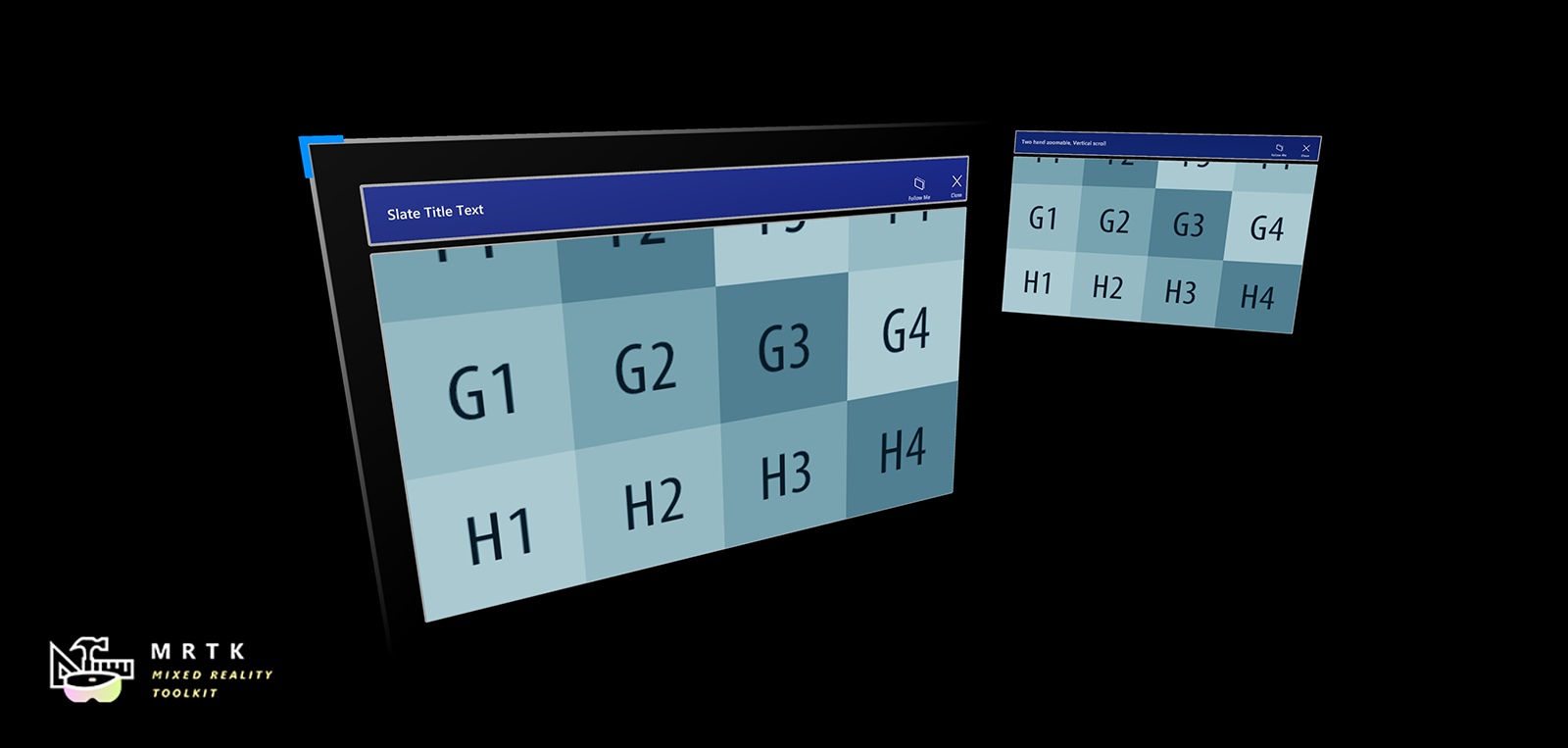

Slate Slate |

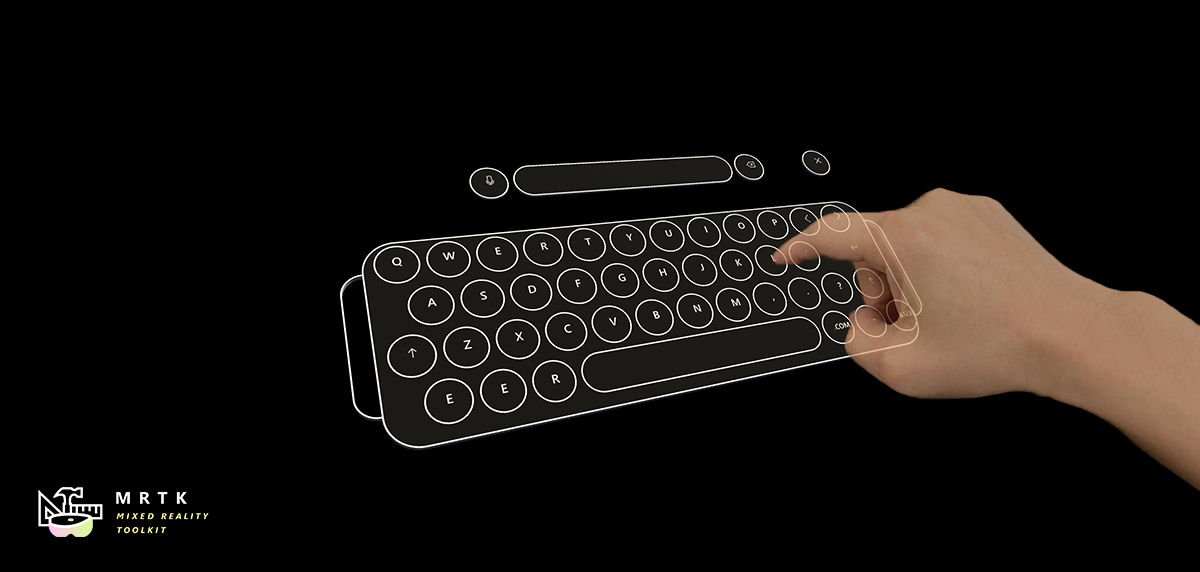

System Keyboard System Keyboard |

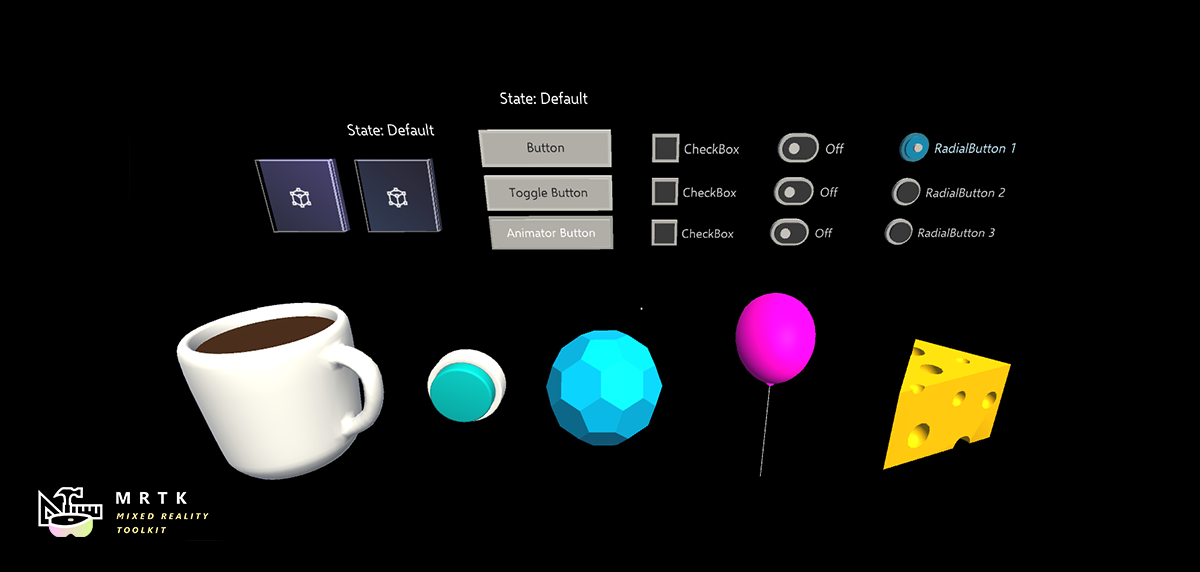

Interactable Interactable |

| 2D style plane which supports scrolling with articulated hand input | Example script of using the system keyboard in Unity | A script for making objects interactable with visual states and theme support |

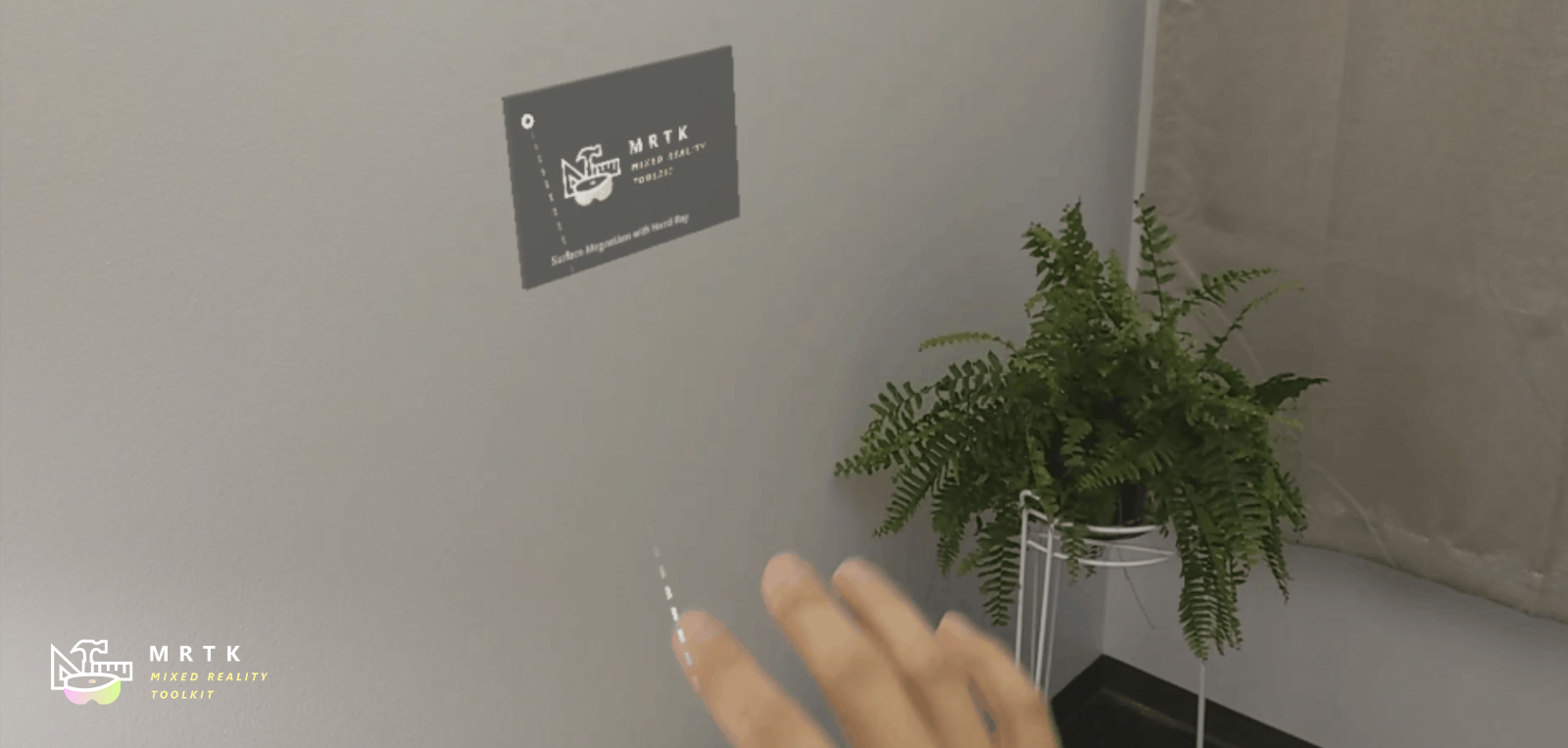

Solver Solver |

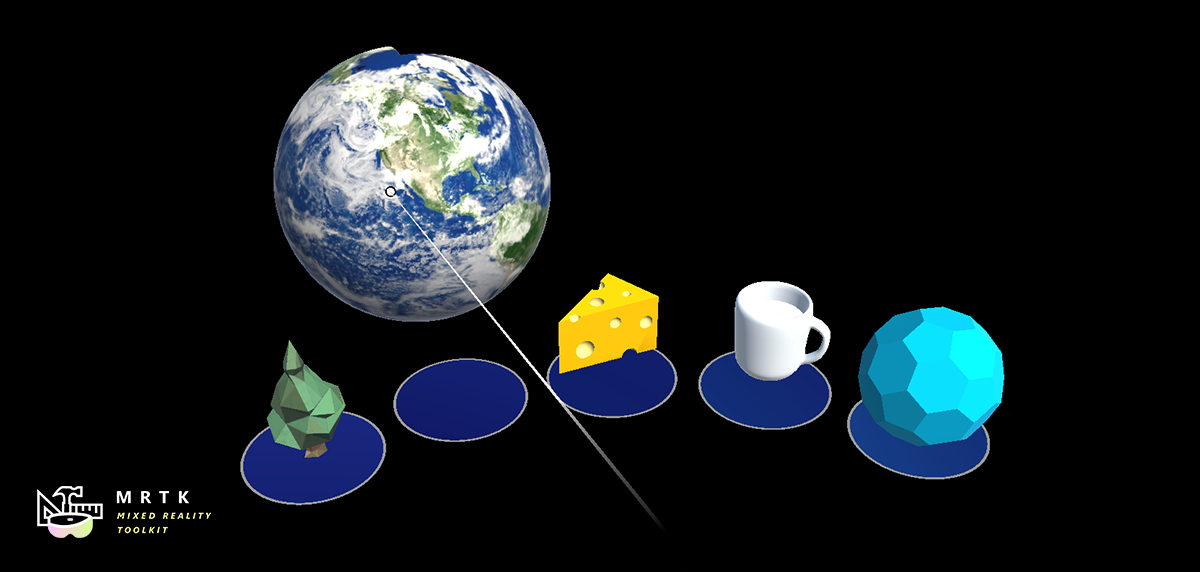

Object Collection Object Collection |

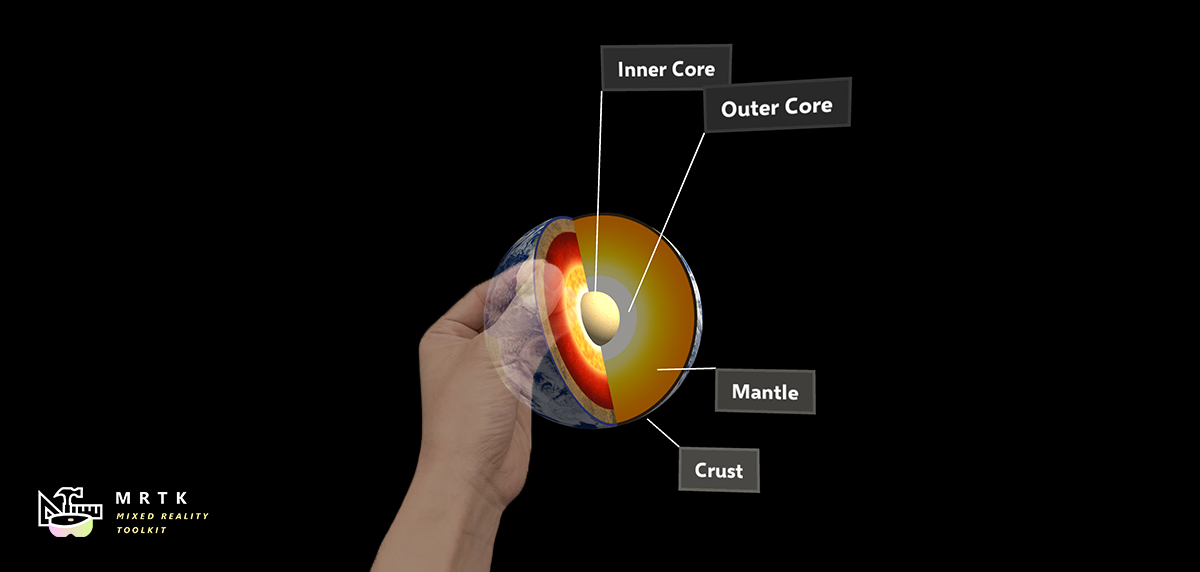

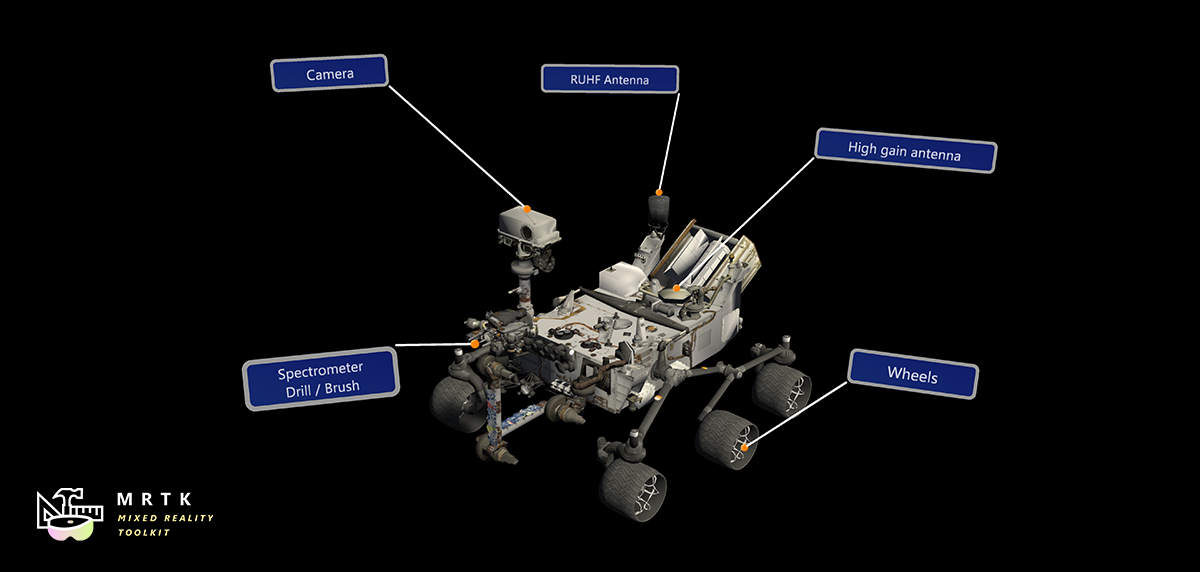

Tooltip Tooltip |

| Various object positioning behaviors such as tag-along, body-lock, constant view size and surface magnetism | Script for laying out an array of objects in a three-dimensional shape | Annotation UI with a flexible anchor/pivot system, which can be used for labeling motion controllers and objects |

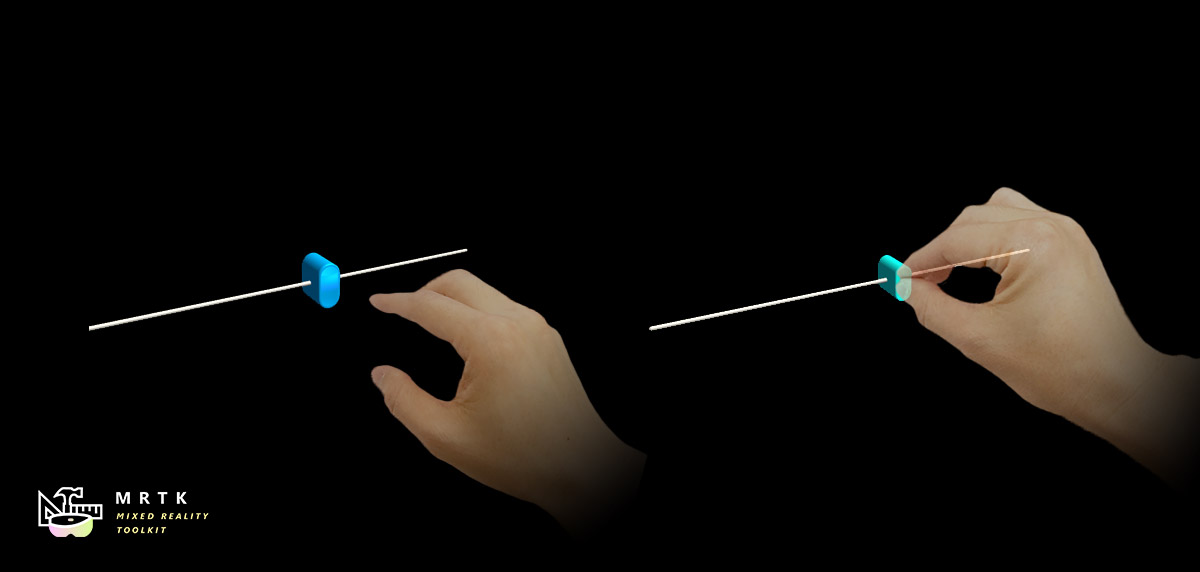

Slider Slider |

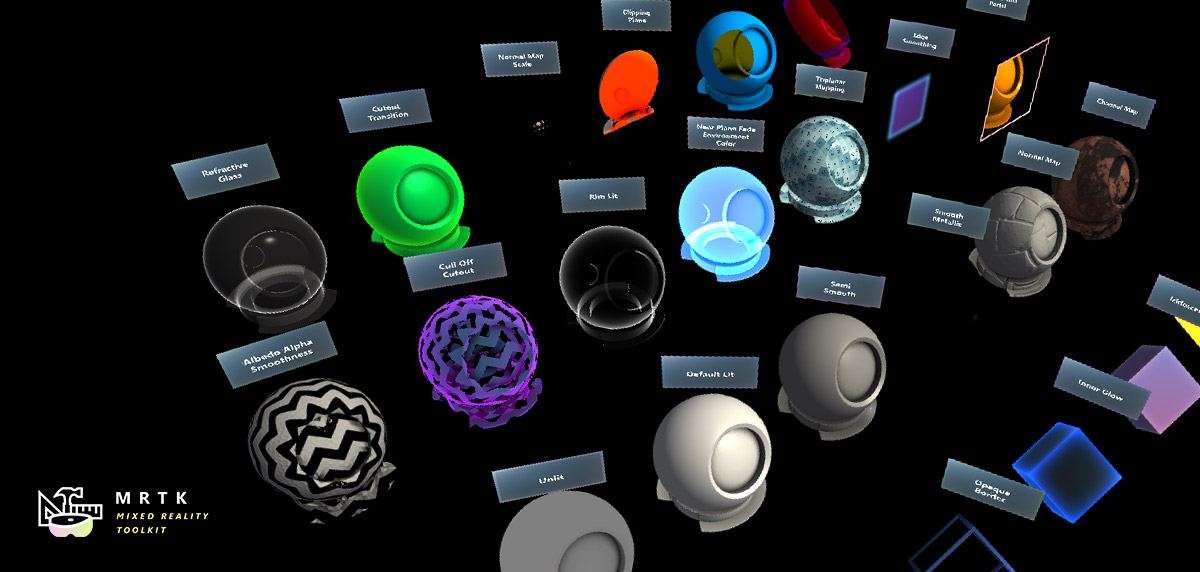

MRTK Standard Shader MRTK Standard Shader |

Hand Menu Hand Menu |

| Slider UI for adjusting values supporting direct hand tracking interaction | MRTK's Standard shader supports various Fluent design elements with performance | Hand-locked UI for quick access, using the Hand Constraint Solver |

App Bar App Bar |

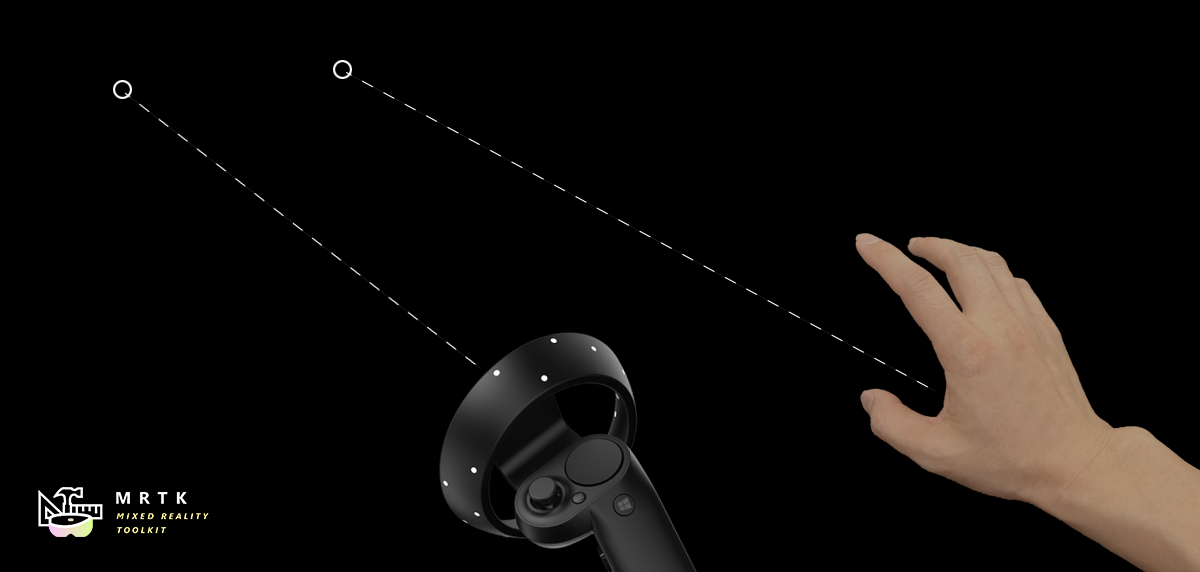

Pointers Pointers |

Fingertip Visualization Fingertip Visualization |

| UI for Bounds Control's manual activation | Learn about various types of pointers | Visual affordance on the fingertip which improves the confidence for the direct interaction |

Near Menu Near Menu |

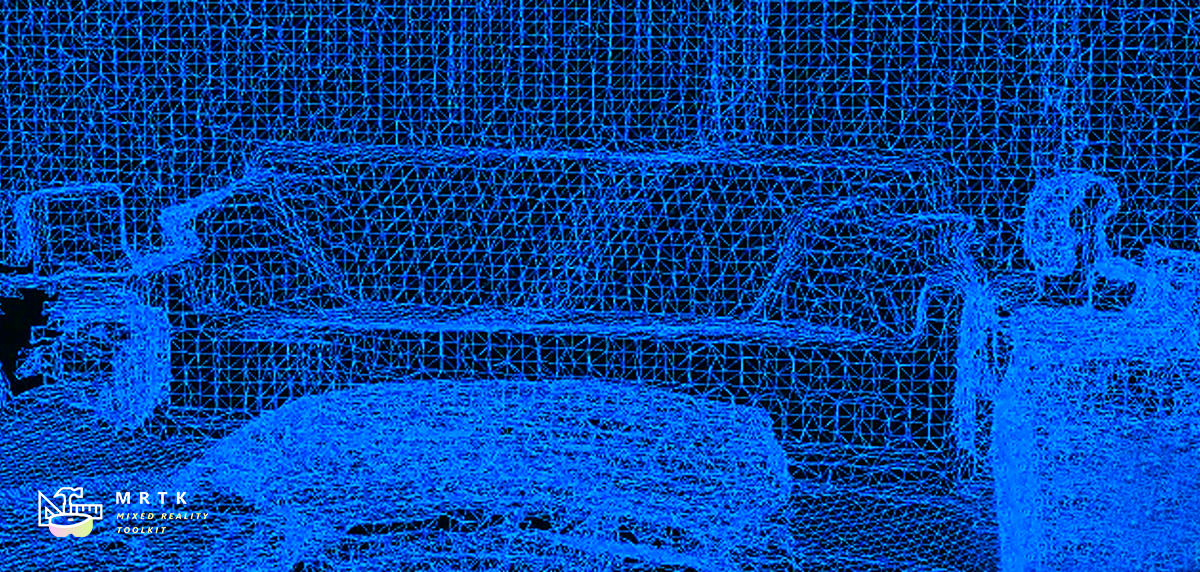

Spatial Awareness Spatial Awareness |

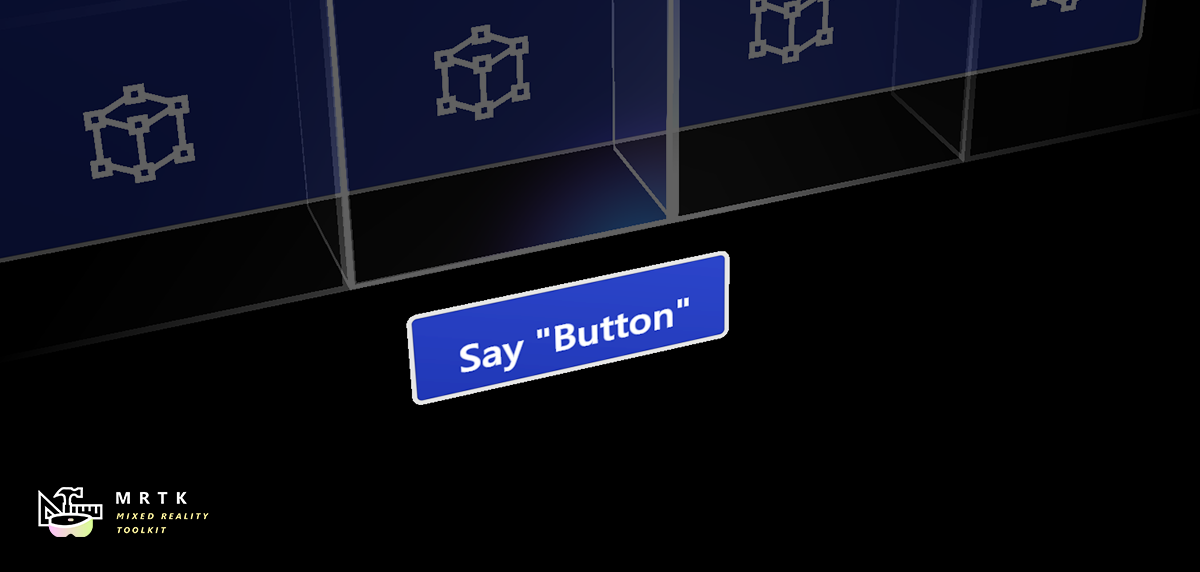

Voice Command / Dictation Voice Command / Dictation |

| Floating menu UI for the near interactions | Make your holographic objects interact with the physical environments | Scripts and examples for integrating speech input |

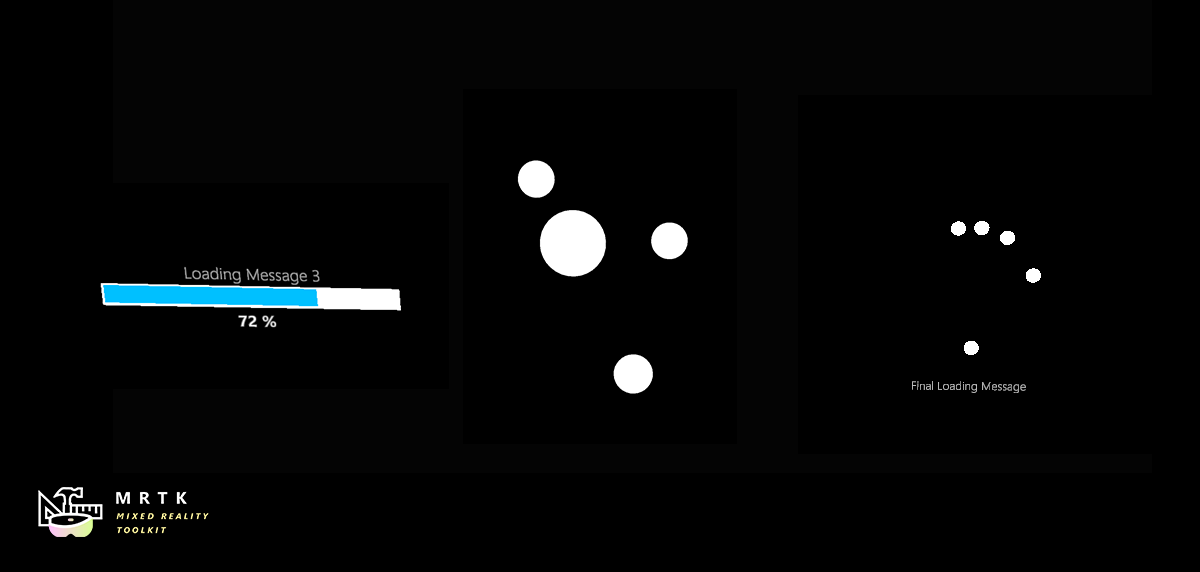

Progress Indicator Progress Indicator |

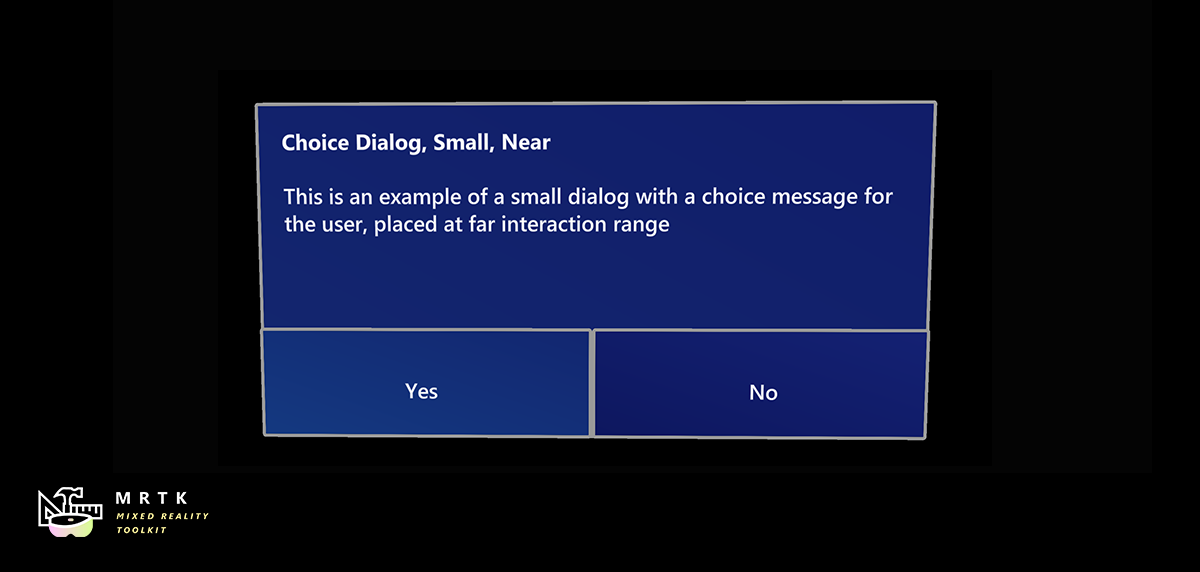

Dialog [Experimental] Dialog [Experimental] |

Hand Coach [Experimental] Hand Coach [Experimental] |

| Visual indicator for communicating data process or operation | UI for asking for user's confirmation or acknowledgement | Component that helps guide the user when the gesture has not been taught |

| Hand Physics Service [Experimental] | Scrolling Collection | Dock [Experimental] |
|---|---|---|
| The hand physics service enables rigid body collision events and interactions with articulated hands | An Object Collection that natively scrolls 3D objects | The Dock allows objects to be moved in and out of predetermined positions |

| Combine eyes, voice and hand input to quickly and effortlessly select holograms across your scene | Learn how to auto-scroll text or fluently zoom into focused content based on what you are looking at | Examples for logging, loading and visualizing what users have been looking at in your app |

Tools

| Automate configuration of Mixed Reality projects for performance optimizations | Analyze dependencies between assets and identify unused assets | Configure and execute an end-to-end build process for Mixed Reality applications | Record and playback head movement and hand tracking data in editor |

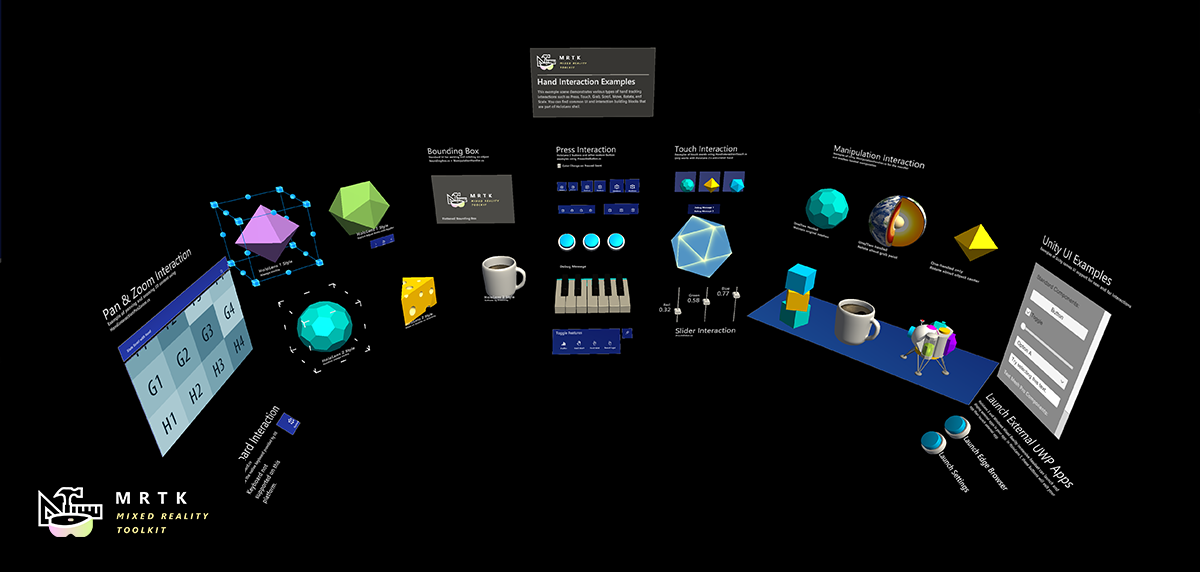

Example scenes

Explore MRTK's various types of interactions and UI controls in this example scene.

You can find other example scenes in the Assets/MixedRealityToolkit.Examples/Demos folder.
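To see which example scenes a local checkout actually contains, a small script can walk the Demos folder. The following is a minimal sketch in Python, assuming it is run against the Unity project root and that scenes are stored as `.unity` files; the helper name `find_example_scenes` is hypothetical and not part of MRTK.

```python
from pathlib import Path

# Folder named in this README; assumed to be relative to the Unity project root.
DEMOS_DIR = Path("Assets") / "MixedRealityToolkit.Examples" / "Demos"


def find_example_scenes(project_root="."):
    """Return sorted scene paths (relative to the project root) under the Demos folder."""
    root = Path(project_root)
    demos = root / DEMOS_DIR
    if not demos.is_dir():
        # The examples package is not imported, or the layout differs in this version.
        return []
    # Match only scene files; Unity's companion ".unity.meta" files are skipped.
    return sorted(p.relative_to(root).as_posix() for p in demos.rglob("*.unity"))


if __name__ == "__main__":
    for scene in find_example_scenes():
        print(scene)
```

Run from the project root, this prints one scene path per line. An empty result usually means the examples package has not been imported into the project.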

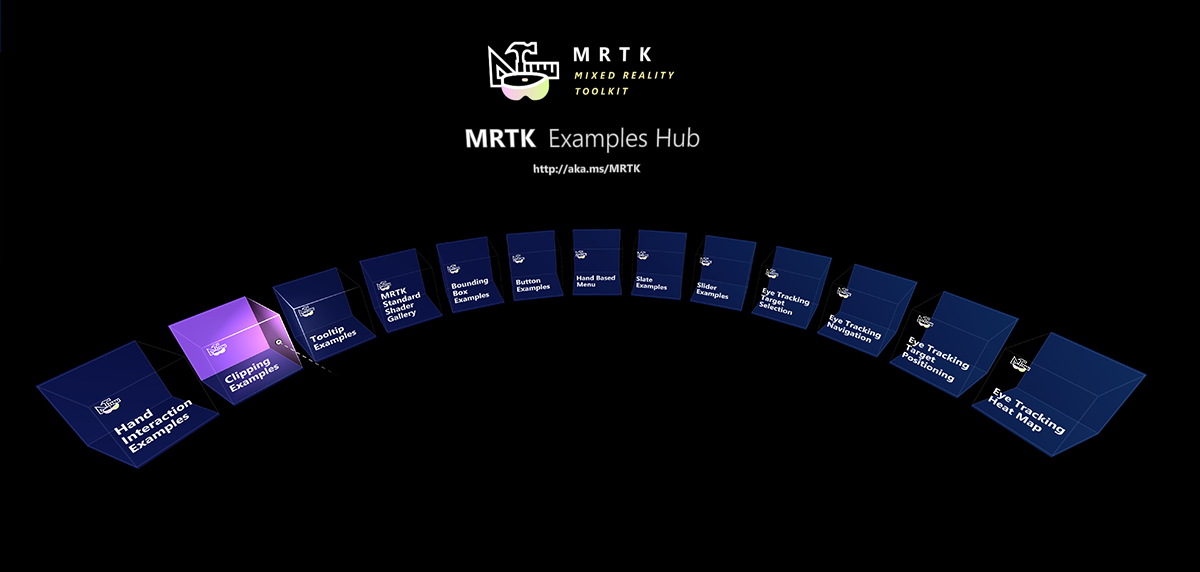

MRTK examples hub

With the MRTK Examples Hub, you can try various example scenes in MRTK. You can find pre-built app packages for HoloLens (x86), HoloLens 2 (ARM), and Windows Mixed Reality immersive headsets (x64) under the Release Assets folder. Use the Windows Device Portal to install apps on HoloLens. On HoloLens 2, you can download and install MRTK Examples Hub through the Microsoft Store app.

See the Examples Hub README page for details on creating a multi-scene hub with MRTK's scene system and scene transition service.

Sample apps made with MRTK

|

|

|

|---|---|---|

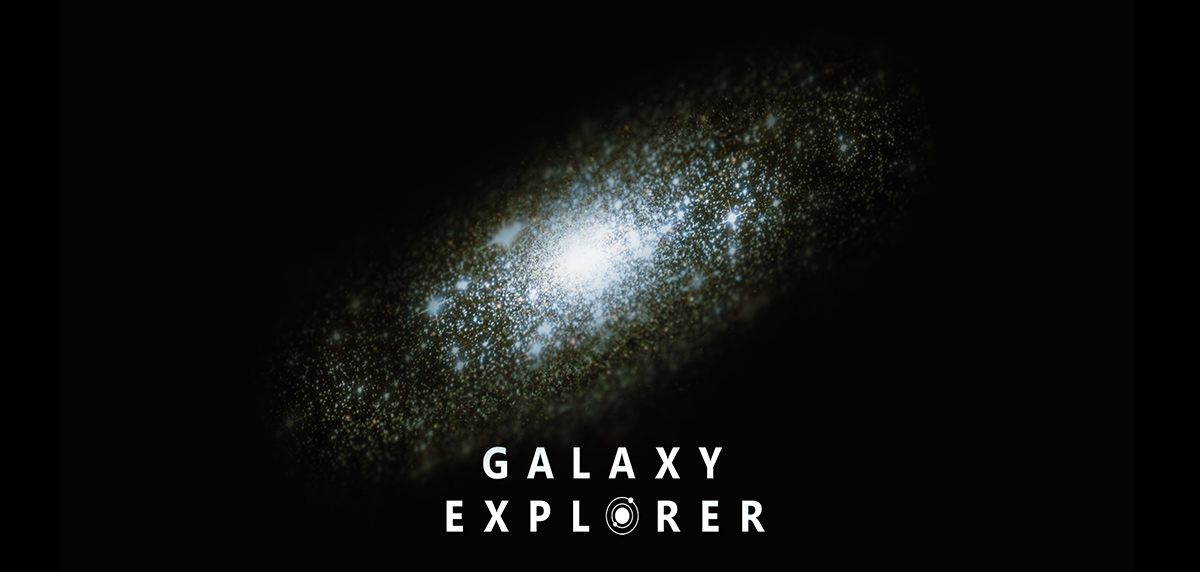

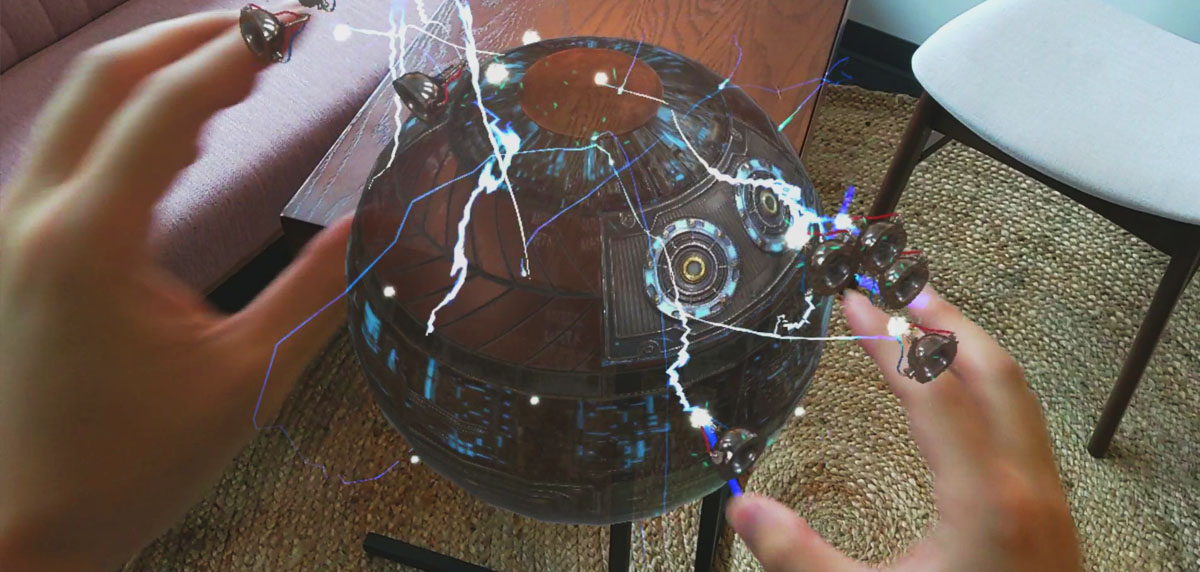

| Periodic Table of the Elements is an open-source sample app which demonstrates how to use MRTK's input system and building blocks to create an app experience for HoloLens and Immersive headsets. Read the porting story: Bringing the Periodic Table of the Elements app to HoloLens 2 with MRTK v2 | Galaxy Explorer is an open-source sample app that was originally developed in March 2016 as part of the HoloLens 'Share Your Idea' campaign. Galaxy Explorer has been updated with new features for HoloLens 2, using MRTK v2. Read the story: The Making of Galaxy Explorer for HoloLens 2 | Surfaces is an open-source sample app for HoloLens 2 which explores how we can create a tactile sensation with visual, audio, and fully articulated hand-tracking. Check out Microsoft MR Dev Days session Learnings from the Surfaces app for the detailed design and development story. |

Session videos from Mixed Reality Dev Days 2020

See Mixed Reality Dev Days to explore more session videos.

Engage with the community

Join the conversation around MRTK on Slack. You can join the Slack community via the automatic invitation sender.

Ask questions about using MRTK on Stack Overflow using the MRTK tag.

Search for known issues or file a new issue if you find something broken in MRTK code.

For questions about contributing to MRTK, go to the mixed-reality-toolkit channel on Slack.

This project has adopted the Microsoft Open Source Code of Conduct. For more information, see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

Useful resources on the Mixed Reality Dev Center

| Learn to build mixed reality experiences for HoloLens and immersive headsets (VR). | Get design guides. Build user interface. Learn interactions and input. | Get development guides. Learn the technology. Understand the science. | Get your app ready for others and consider creating a 3D launcher. |

Useful resources on Azure

| Spatial Anchors | | |
|---|---|---|
| Spatial Anchors is a cross-platform service that allows you to create Mixed Reality experiences using objects that persist their location across devices over time. | Discover and integrate Azure powered speech capabilities like speech to text, speaker recognition or speech translation into your application. | Identify and analyze your image or video content using Vision Services like computer vision, face detection, emotion recognition or video indexer. |

Learn more about the MRTK project

You can find our planning material on our wiki under the Project Management Section. You can always see the items the team is actively working on in the Iteration Plan issue.

How to contribute

Learn how you can contribute to MRTK at Contributing.

For details on the different branches used in the Mixed Reality Toolkit repositories, see the Branch Guide.